Towards a Digital Aesthetic

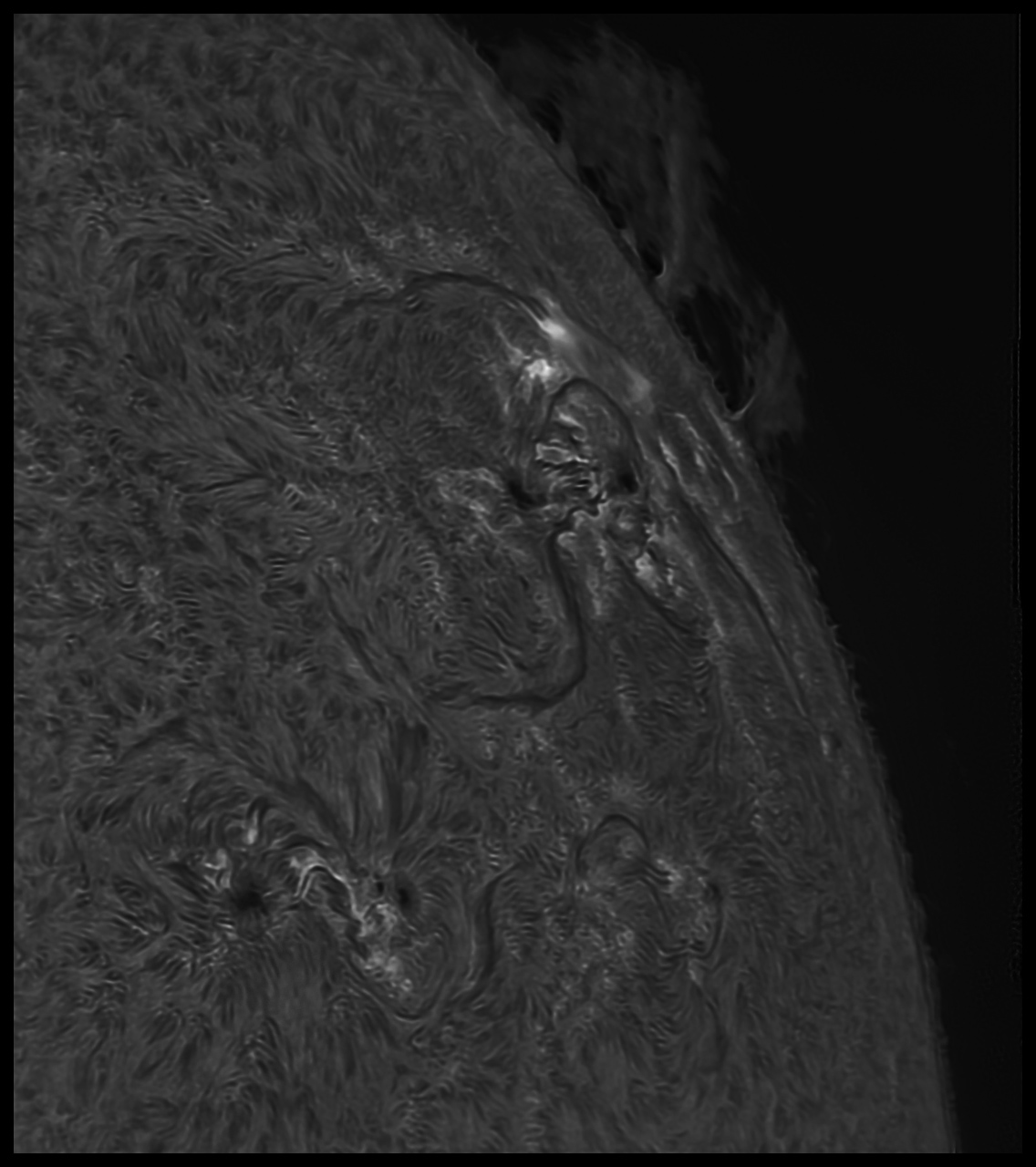

06/08/2024. A star on the face of the Sun. This week, the active region formerly known as AR3664 disappeared around the limb of the Sun, carried by the Sun's rotation. Yesterday, on the way out, it threw one more flare. For this photo, just as the flare began to ramp up, I "exposed for the highlights" and let the rest of the frame go dark. Never mind that "the rest of the frame" was the blinding face of the noonday Sun.

Make it big; it's a good one.

-----

TMB92SS, Daystar Quark, ZWO ASI178MM, FireCapture 2.7, AutoStakkert!4

816x910 ROI w/flatfield, 14-bit, binned 2x2, gamma 22, 1.4ms, best 1,000 of 5,000 frames, drizzled 3x.
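The "best 1,000 of 5,000 frames" and "binned 2x2" settings are lucky-imaging bookkeeping. Here is a minimal Python/NumPy sketch of the idea. It assumes a simple sharpness score (variance of a discrete Laplacian) as a stand-in for AutoStakkert!'s own quality estimator, which works differently and also aligns frames before stacking. All function names are hypothetical.

```python
import numpy as np


def laplacian_variance(frame: np.ndarray) -> float:
    """Sharpness proxy: variance of a 4-neighbour discrete Laplacian.
    Frames softened by seeing have less high-frequency energy, so they score lower."""
    f = frame.astype(np.float64)
    lap = (-4.0 * f[1:-1, 1:-1]
           + f[:-2, 1:-1] + f[2:, 1:-1]
           + f[1:-1, :-2] + f[1:-1, 2:])
    return float(lap.var())


def select_best(frames: np.ndarray, keep: int) -> np.ndarray:
    """Keep the `keep` highest-scoring frames, returned in capture order."""
    scores = np.array([laplacian_variance(f) for f in frames])
    best = np.argsort(scores)[::-1][:keep]
    return frames[np.sort(best)]


def bin2x2(frame: np.ndarray) -> np.ndarray:
    """Software 2x2 binning: sum each 2x2 block (an odd last row/column is dropped)."""
    h, w = (frame.shape[0] // 2) * 2, (frame.shape[1] // 2) * 2
    f = frame[:h, :w].astype(np.float64)
    return f.reshape(h // 2, 2, w // 2, 2).sum(axis=(1, 3))


# Usage: a scaled-down "best 10 of 50", then a plain mean stack (no alignment here).
rng = np.random.default_rng(0)
frames = rng.normal(1000.0, 20.0, size=(50, 64, 64))
stack = bin2x2(select_best(frames, keep=10).mean(axis=0))
```

Real stackers also register each frame (and often local patches) before averaging; skipping that step is the main simplification here.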

Photoshop: dehaze, shadows boosted, noise-reduced, pseudo-flatted 65%.

ImPPG: L-R, 70 passes, ringing suppressed.
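"L-R" is Lucy-Richardson (also called Richardson-Lucy) deconvolution, and "70 passes" is its iteration count. Below is a bare-bones NumPy sketch of the iteration, assuming a Gaussian blur kernel of width `sigma` and circular (FFT) boundaries. It is not ImPPG's code: ImPPG's ringing suppression, tone curve, and edge handling are not reproduced, and the function and parameter names are illustrative.

```python
import numpy as np


def gaussian_psf(shape, sigma):
    """Centred, normalised Gaussian PSF the same size as the image."""
    h, w = shape
    yy, xx = np.meshgrid(np.arange(h) - h // 2, np.arange(w) - w // 2, indexing="ij")
    psf = np.exp(-(xx**2 + yy**2) / (2.0 * sigma**2))
    return psf / psf.sum()


def fft_convolve(img, otf):
    """Circular convolution via the FFT, given the kernel's transform (OTF)."""
    return np.real(np.fft.ifft2(np.fft.fft2(img) * otf))


def richardson_lucy(observed, sigma, iterations=70, eps=1e-12):
    observed = observed.astype(np.float64)
    # ifftshift moves the PSF centre to index (0, 0), as the FFT expects.
    otf = np.fft.fft2(np.fft.ifftshift(gaussian_psf(observed.shape, sigma)))
    otf_flipped = np.conj(otf)  # correlating with a real PSF = multiplying by conj(OTF)
    estimate = np.full_like(observed, observed.mean())
    for _ in range(iterations):
        blurred = fft_convolve(estimate, otf)            # what the current guess would look like
        ratio = observed / np.maximum(blurred, eps)      # where the guess is too dim or too bright
        estimate = estimate * fft_convolve(ratio, otf_flipped)  # multiplicative correction
    return estimate


# Usage: blur a point source on a dim background, then try to recover it.
truth = np.ones((32, 32))
truth[16, 16] = 100.0
blurred = fft_convolve(truth, np.fft.fft2(np.fft.ifftshift(gaussian_psf(truth.shape, 2.0))))
restored = richardson_lucy(blurred, sigma=2.0, iterations=70)
```

The update is multiplicative, so the estimate stays non-negative and total brightness is preserved. More passes recover more detail but also amplify noise and ringing, which is one reason "get it the way you want it, then back off" works.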

Photoshop: lightly unsharp masked, mildly noise-reduced to soften edges, resampled for the web.
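For reference, the classic unsharp-mask formula behind Photoshop's Unsharp Mask (Amount, Radius, Threshold) is: image plus Amount times (image minus a blurred copy). The sketch below assumes a Gaussian blur whose `sigma` stands in for Radius; Photoshop's exact Radius-to-blur mapping is not assumed to match. It follows this with a block-average downsample as a simple stand-in for "resampled for the web". Photoshop's bicubic resampling is a different method.

```python
import numpy as np


def gaussian_blur(img: np.ndarray, sigma: float) -> np.ndarray:
    """Separable Gaussian blur with edge-replicated borders; output has the input's shape."""
    radius = max(1, int(round(3 * sigma)))
    x = np.arange(-radius, radius + 1, dtype=np.float64)
    k = np.exp(-x**2 / (2 * sigma**2))
    k /= k.sum()
    padded = np.pad(img.astype(np.float64), radius, mode="edge")
    rows = np.apply_along_axis(np.convolve, 1, padded, k, mode="valid")
    return np.apply_along_axis(np.convolve, 0, rows, k, mode="valid")


def unsharp_mask(img, sigma=1.0, amount=0.3, threshold=0.0):
    """img + amount * (img - blur(img)); differences smaller than `threshold` are left alone."""
    img = img.astype(np.float64)
    detail = img - gaussian_blur(img, sigma)
    detail[np.abs(detail) < threshold] = 0.0
    return img + amount * detail


def downsample(img, factor):
    """Shrink by an integer factor, averaging each factor x factor block."""
    h = (img.shape[0] // factor) * factor
    w = (img.shape[1] // factor) * factor
    blocks = img[:h, :w].astype(np.float64).reshape(h // factor, factor, w // factor, factor)
    return blocks.mean(axis=(1, 3))


# Usage: "lightly" sharpen a hard edge (small amount), then halve the size for display.
step = np.zeros((20, 20))
step[:, 10:] = 1.0
sharpened = unsharp_mask(step, sigma=1.0, amount=0.3)
web = downsample(sharpened, 2)
```

Even a light pass produces a bright halo on one side of an edge and a dark one on the other. Keeping the amount small keeps those halos below the point where they read as detail.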

One key to good finished tones in narrow-band solar imaging seems to be sticking to one or two sharpening algorithms. Resist the urge to throw the entire toolbox at any given image. It's as much the confusion of sharpening cues from different algorithms as their overuse, per se, that contributes to the harsh, damaged look of "over-processed" images. Especially resist the urge to render delicate detail vivid; it simply isn't. It is enough that fine detail is visible in the final photograph.
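A toy one-dimensional illustration of the overuse half of that argument: two unsharp-mask passes with different radii stand in for two different sharpening algorithms. Real deconvolution and SmartSharpen behave differently, so treat this as a cartoon. On a soft edge each pass adds its own overshoot, and the second pass treats the first pass's halo as detail and sharpens it further.

```python
import numpy as np


def blur1d(signal: np.ndarray, sigma: float) -> np.ndarray:
    """Gaussian blur of a 1-D profile with edge-replicated ends (same length out)."""
    radius = max(1, int(round(3 * sigma)))
    x = np.arange(-radius, radius + 1, dtype=np.float64)
    k = np.exp(-x**2 / (2 * sigma**2))
    k /= k.sum()
    return np.convolve(np.pad(signal, radius, mode="edge"), k, mode="valid")


def usm1d(signal, sigma, amount):
    """One unsharp-mask pass: add back `amount` times the high-pass residual."""
    return signal + amount * (signal - blur1d(signal, sigma))


def overshoot(signal, bright_level=1.0):
    """How far the profile rises above the true bright level (the halo)."""
    return float(signal.max() - bright_level)


# A soft dark-to-bright edge, like a seeing-blurred boundary.
edge = blur1d(np.concatenate([np.zeros(50), np.ones(50)]), sigma=2.0)
one = usm1d(edge, sigma=2.0, amount=0.5)   # first sharpener
two = usm1d(one, sigma=0.8, amount=0.5)    # a second, different sharpener on top
print(f"halo after one pass: {overshoot(one):.4f}, after two: {overshoot(two):.4f}")
```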

I'm not saying there aren't exceptions -- the only rule to which there are no exceptions is the one saying that there are always exceptions -- but in general, when it comes to this sort of imaging, try not to get any more elaborate with the toolbox than this:

- Use Photoshop to crop unstacked edges, to impose mild noise suppression, maybe indulge in just a tidge of dehaze and sometimes just a slight boost to shadow detail.

- The pseudoflat action is my new obsession. Give it a try before sharpening. Pull it back to 60-70% (layer, fade) to keep deep shadows behaving like deep shadows, but do whatever works. Sometimes leave this step off; sometimes use it at 100%.

- ImPPG seems able to produce better sharpness than SmartSharpen. Using ImPPG is a little more trouble, since the image has to be saved, loaded into ImPPG, and then saved again to resume processing elsewhere, but the extra steps often pay off. Within ImPPG, the rule seems to be to get it the way you want it, then back off just a bit. Take it easy.

- Photoshop again for polish (maybe a tiny bit of USM if you just can't resist), some noise reduction to soften things up some, lower contrast, resample, and final histogram tweaks. (See example above.)
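What the pseudoflat action actually does isn't spelled out here, so the following is an assumption. A common way to make a "pseudo flat" is to divide the image by a heavily blurred copy of itself. This evens out large-scale brightness gradients while leaving fine structure alone. The sketch does that with a large box blur, then blends the result back over the original at a chosen strength, roughly what fading a Photoshop layer to 60-70% does. `pseudoflat`, `radius`, and `strength` are hypothetical names.

```python
import numpy as np


def box_blur(img, radius):
    """Mean over a (2r+1)x(2r+1) window via a summed-area table; edges are replicated."""
    r = radius
    k = 2 * r + 1
    h, w = img.shape
    p = np.pad(img.astype(np.float64), r, mode="edge")
    # s[i, j] = sum of p[:i, :j]; the leading zero row/column simplifies the indexing.
    s = np.pad(p.cumsum(axis=0).cumsum(axis=1), ((1, 0), (1, 0)))
    window = s[k:k + h, k:k + w] - s[:h, k:k + w] - s[k:k + h, :w] + s[:h, :w]
    return window / (k * k)


def pseudoflat(img, radius=50, strength=0.65, eps=1e-6):
    """Divide out large-scale brightness, then blend back over the original.
    strength=0.65 mimics fading the flattened layer to 65%."""
    img = img.astype(np.float64)
    background = box_blur(img, radius)
    flattened = img / np.maximum(background, eps) * background.mean()
    return (1.0 - strength) * img + strength * flattened


# Usage: a left-to-right brightness gradient, like limb darkening or an imperfect flat.
ramp = np.tile(np.linspace(1.0, 2.0, 32), (32, 1))
full = pseudoflat(ramp, radius=3, strength=1.0)
faded = pseudoflat(ramp, radius=3, strength=0.65)
```

At full strength the interior gradient vanishes. At 65% a third of it survives, which is one way to keep deep shadows looking like deep shadows.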

Aggressive processing cannot save an essentially blurry photo. It can help out a lot, but at some point the game is over. Stop before you get to that point. Deep (tall?) stacks generally permit more aggressive processing than shallow (short) stacks. Expose left; the right edge of the histogram is unforgiving.
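"Expose left" is easy to check numerically on a raw frame. A minimal sketch, assuming pixel values run from 0 to 2^bits - 1. Some capture software scales 14-bit data into a 16-bit range, in which case pass `bit_depth=16`. The 98% "near clipping" margin is an arbitrary choice, and the function name is hypothetical.

```python
import numpy as np


def exposure_report(frame, bit_depth=14, near_margin=0.98):
    """Fraction of pixels clipped or nearly clipped, and the brightest pixel as a fraction of full scale."""
    full_scale = 2 ** bit_depth - 1
    f = np.asarray(frame, dtype=np.float64)
    return {
        "clipped": float(np.mean(f >= full_scale)),
        "near_clip": float(np.mean(f >= near_margin * full_scale)),
        "peak_fraction": float(f.max() / full_scale),
    }


# Usage: half of this tiny 14-bit frame sits at full scale, so that structure is gone for good.
report = exposure_report(np.array([[0, 8000], [16383, 16383]]))
```

Any nonzero `clipped` value on the flare itself means detail that no amount of processing will bring back.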

To avoid the need for desperate measures during processing, focus like a goddamn lunatic at the beginning. At the end, do not reach for detail beyond what the image and the instrumentation can deliver. Excessive plate scales, real or implied, are like heroic processing measures in that they don't usually help anything. Sampling at 3-4 pixels across the diffraction limit is more than enough to get all the details you're going to get. (If you want a lot of pixels to work with, drizzle during stacking and downsample for display; if you really want better resolution, use a bigger lens.) Being too aggressive at any particular step (or with all steps together) and working at excessive plate scales just makes plain the shortcomings of the capture rather than the details within it.
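The sampling rule of thumb can be checked with a little arithmetic, taking it to mean 3-4 pixels across the diffraction-limited resolution (the Rayleigh criterion, 1.22 λ/D). The optical numbers below are assumptions for illustration, not taken from this post: H-alpha at 656.28 nm, a 92 mm aperture (from the TMB92SS name), a native focal length of about 506 mm multiplied roughly 4.2x by the Quark, and 2.4 µm pixels doubled by 2x2 binning. Substitute your own.

```python
ARCSEC_PER_RADIAN = 206264.806


def rayleigh_limit_arcsec(aperture_mm: float, wavelength_nm: float) -> float:
    """Diffraction-limited resolution, 1.22 * lambda / D, in arcseconds."""
    return 1.22 * (wavelength_nm * 1e-9) / (aperture_mm * 1e-3) * ARCSEC_PER_RADIAN


def plate_scale_arcsec_per_px(pixel_um: float, focal_length_mm: float) -> float:
    """Sky angle covered by one pixel: pixel size / focal length, in arcseconds."""
    return (pixel_um * 1e-3) / focal_length_mm * ARCSEC_PER_RADIAN


def pixels_per_limit(aperture_mm, wavelength_nm, pixel_um, focal_length_mm):
    """How many pixels span one diffraction-limited resolution element."""
    return (rayleigh_limit_arcsec(aperture_mm, wavelength_nm)
            / plate_scale_arcsec_per_px(pixel_um, focal_length_mm))


# Assumed setup (illustrative values; check your own gear).
APERTURE_MM = 92.0
WAVELENGTH_NM = 656.28
FOCAL_MM = 506.0 * 4.2   # native focal length times the Quark's built-in amplification
PIXEL_UM = 2.4 * 2       # 2x2 binning doubles the effective pixel size

samples = pixels_per_limit(APERTURE_MM, WAVELENGTH_NM, PIXEL_UM, FOCAL_MM)
print(f"{samples:.2f} pixels per Rayleigh limit")
```

With these assumed numbers it comes out a little under 4 pixels, inside the suggested range. Unbinned, the same setup would sample at roughly twice that, well past it. Drizzling multiplies the pixel count further without adding resolution.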

I've been playing with tons of old images captured with 1x1 binning and plenty of new ones at 2x2. I've taken to preserving each step along the way so I can try different workflows, pachinko-ing through several options looking for processes that will give me fine detail without artifact, subtle tones without artifice. The proposed wisdom up above is the best I've got so far. The broad sense that it is unwise to combine max entropy decon with SmartSharpen with Unsharp Masking, etc., etc., seems important. That way lie artifacts ("artificial sharpness"), and artifacts contribute, subtly or rudely, to the sense that the image has been overcooked, one way or another. Stick to one method -- consider very minor tweaking using others, but only very carefully and suspiciously. What is true of edge and transition processing may also be true of histogram processing -- keep it as straightforward as possible.
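Keeping every intermediate so that alternative workflows can branch from any stage is easy to automate. Here is a minimal sketch in plain Python. It assumes each step has a unique name, so put the parameters in the name, e.g. "lr-70". The class and step names are hypothetical. A real version would write each stage to disk (say, as a 16-bit TIFF) rather than keep it in memory.

```python
from typing import Any, Callable, Dict, List, Tuple

Step = Tuple[str, Callable[[Any], Any]]


class CheckpointedWorkflow:
    """Caches every intermediate keyed by the sequence of step names that produced it,
    so a new workflow re-runs only the steps that differ from ones already tried."""

    def __init__(self, stacked_image: Any):
        self.cache: Dict[Tuple[str, ...], Any] = {(): stacked_image}
        self.steps_run = 0

    def run(self, steps: List[Step]) -> Any:
        key: Tuple[str, ...] = ()
        for name, fn in steps:
            parent = self.cache[key]
            key = key + (name,)
            if key not in self.cache:        # only compute stages we haven't seen
                self.cache[key] = fn(parent)
                self.steps_run += 1
        return self.cache[key]


# Usage with numbers standing in for images.
wf = CheckpointedWorkflow(10)
pseudo = ("pseudoflat-65", lambda x: x - 1)
lr = ("lr-70", lambda x: x + 5)
usm = ("usm-light", lambda x: x * 2)
a = wf.run([pseudo, lr])    # two new steps computed
b = wf.run([pseudo, usm])   # reuses the pseudoflat stage; one new step
```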

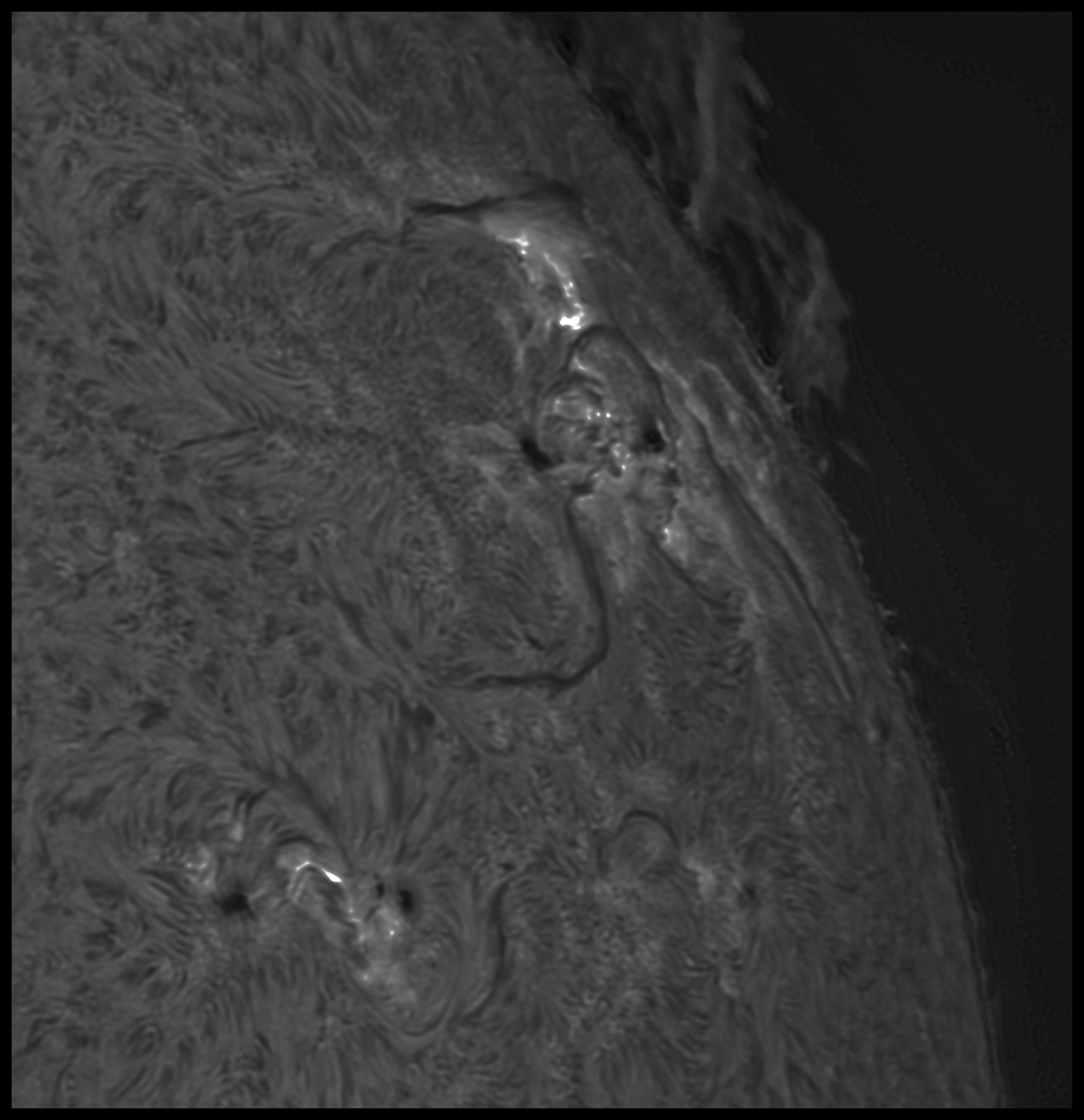

15 minutes later
